GPUFleet

Discrete-event GPU scheduler simulator for ML training workloads.

Python · Discrete-Event Simulation · GPU Scheduling · ML Infrastructure

Simulates mixed ML workloads on heterogeneous GPU clusters and compares FIFO, Bin Packing, Cost-Aware, and Priority scheduling across utilization, cost, latency, fairness, and throughput. Deterministic, reproducible, and fully traced.

Built while exploring GPU scheduling tradeoffs for AI infrastructure, informed by prior work reducing a projected $6.5M AWS trajectory to $1.5M. Read the case study →

The problem

GPU clusters are expensive to operate, and scheduling strategy has a direct impact on utilization, cost, and job latency. But the tradeoffs between strategies are poorly understood because they depend on workload mix, cluster topology, and placement constraints.

GPUFleet provides a controlled environment to test these tradeoffs. Run the same workload through different schedulers, compare the outcomes, and inspect the decision trace to understand why.

Approach

A discrete-event simulation engine processes job arrivals, scheduling decisions, and completions using a min-heap event queue. The engine jumps between meaningful events rather than iterating through empty time. Time-stepped simulation becomes expensive and introduces artificial scheduling artifacts under sparse workloads; a discrete-event model keeps complexity proportional to actual system activity while preserving correctness.
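The event loop described above can be sketched roughly as follows. This is a minimal illustration, not GPUFleet's actual engine: the `Event` class, the `run` function, and the `(arrival_time, job_id, gpus, duration)` tuple layout are all hypothetical, and the cluster is reduced to a single pool of GPUs with a strict FIFO pass.

```python
import heapq
import itertools
from dataclasses import dataclass, field


@dataclass(order=True)
class Event:
    # Hypothetical event record; only (time, seq) take part in ordering.
    time: float
    seq: int  # monotonically increasing tie-breaker keeps runs deterministic
    kind: str = field(compare=False)
    job_id: str = field(compare=False)


def run(arrivals, total_gpus):
    """arrivals: list of (arrival_time, job_id, gpus, duration) tuples (assumed layout).

    Returns a list of (completion_time, job_id) in completion order.
    """
    counter = itertools.count()
    jobs = {job_id: (gpus, duration) for _, job_id, gpus, duration in arrivals}
    queue = [Event(t, next(counter), "arrival", job_id) for t, job_id, _, _ in arrivals]
    heapq.heapify(queue)

    free, pending, completed = total_gpus, [], []
    while queue:
        ev = heapq.heappop(queue)  # jump straight to the next meaningful event
        if ev.kind == "arrival":
            pending.append(ev.job_id)
        else:  # completion frees the job's GPUs
            free += jobs[ev.job_id][0]
            completed.append((ev.time, ev.job_id))

        # Scheduling pass (strict FIFO): start head-of-line jobs while they fit.
        while pending and jobs[pending[0]][0] <= free:
            job_id = pending.pop(0)
            gpus, duration = jobs[job_id]
            free -= gpus
            heapq.heappush(
                queue, Event(ev.time + duration, next(counter), "completion", job_id)
            )
    return completed


print(run([(0.0, "a", 4, 10.0), (1.0, "b", 8, 5.0), (2.0, "c", 4, 3.0)], total_gpus=8))
```

The loop does work only when an arrival or completion occurs, so its cost scales with the number of events rather than with simulated time. Note that job `c` waits behind `b` even though 4 GPUs are free, which is the head-of-line blocking a strict FIFO pass produces.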

Four pluggable scheduler strategies compete on the same synthetic workload. Each produces a full decision trace (JSONL) recording every scheduling pass: what jobs were pending, what placements were feasible, and what the scheduler chose and why.

- FIFO: First-come first-served, first-fit placement. Simple baseline.
- Bin Packing: Largest jobs first, pack onto fullest nodes. Reduces fragmentation at the cost of latency.
- Cost-Aware: Evaluates all feasible placements, chooses the cheapest. Advantage grows with GPU cost variance.
- Priority: High-priority jobs first. Maximizes throughput but starves low-priority work.
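To make the differences between the four strategies concrete, here is a simplified sketch of how each might pick one job and one node per scheduling pass. Everything in it is an assumption for illustration: the `Job` and `Node` types, the `pick_*` functions, the `SCHEDULERS` registry, the per-GPU `price` field, and the restriction to same-node placement are not taken from GPUFleet's code. Cost-Aware is assumed to consider jobs in arrival order, and "fullest node" is approximated as the feasible node with the fewest free GPUs.

```python
from typing import NamedTuple


class Job(NamedTuple):
    job_id: str
    gpus: int
    priority: int  # assumed convention: higher means more urgent
    arrival: float


class Node(NamedTuple):
    name: str
    free: int      # idle GPUs on this node
    price: float   # hypothetical $/GPU-hour


def feasible(job, nodes):
    """Nodes that can host the whole job (same-node placement only)."""
    return [n for n in nodes if n.free >= job.gpus]


def pick_fifo(pending, nodes):
    job = min(pending, key=lambda j: j.arrival)       # oldest job first
    fits = feasible(job, nodes)
    return (job, fits[0]) if fits else None           # first fit


def pick_bin_packing(pending, nodes):
    job = max(pending, key=lambda j: j.gpus)          # largest job first
    fits = feasible(job, nodes)
    # Fullest node that still fits = fewest free GPUs among feasible nodes.
    return (job, min(fits, key=lambda n: n.free)) if fits else None


def pick_cost_aware(pending, nodes):
    job = min(pending, key=lambda j: j.arrival)       # assumed: arrival order
    fits = feasible(job, nodes)
    return (job, min(fits, key=lambda n: n.price)) if fits else None  # cheapest


def pick_priority(pending, nodes):
    job = max(pending, key=lambda j: (j.priority, -j.arrival))  # urgent first
    fits = feasible(job, nodes)
    return (job, fits[0]) if fits else None


# A registry like this is one way to make strategies pluggable.
SCHEDULERS = {
    "fifo": pick_fifo,
    "bin_packing": pick_bin_packing,
    "cost_aware": pick_cost_aware,
    "priority": pick_priority,
}

nodes = [Node("n0", 8, 3.0), Node("n1", 4, 2.0), Node("n2", 2, 1.0)]
pending = [Job("a", 2, 0, 0.0), Job("b", 4, 5, 1.0), Job("c", 1, 1, 2.0)]
for name, pick in SCHEDULERS.items():
    job, node = pick(pending, nodes)
    print(f"{name}: {job.job_id} -> {node.name}")
```

On the same pending queue and cluster state, the four functions choose different job and node pairs, which is the kind of divergence the decision trace records.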

Results

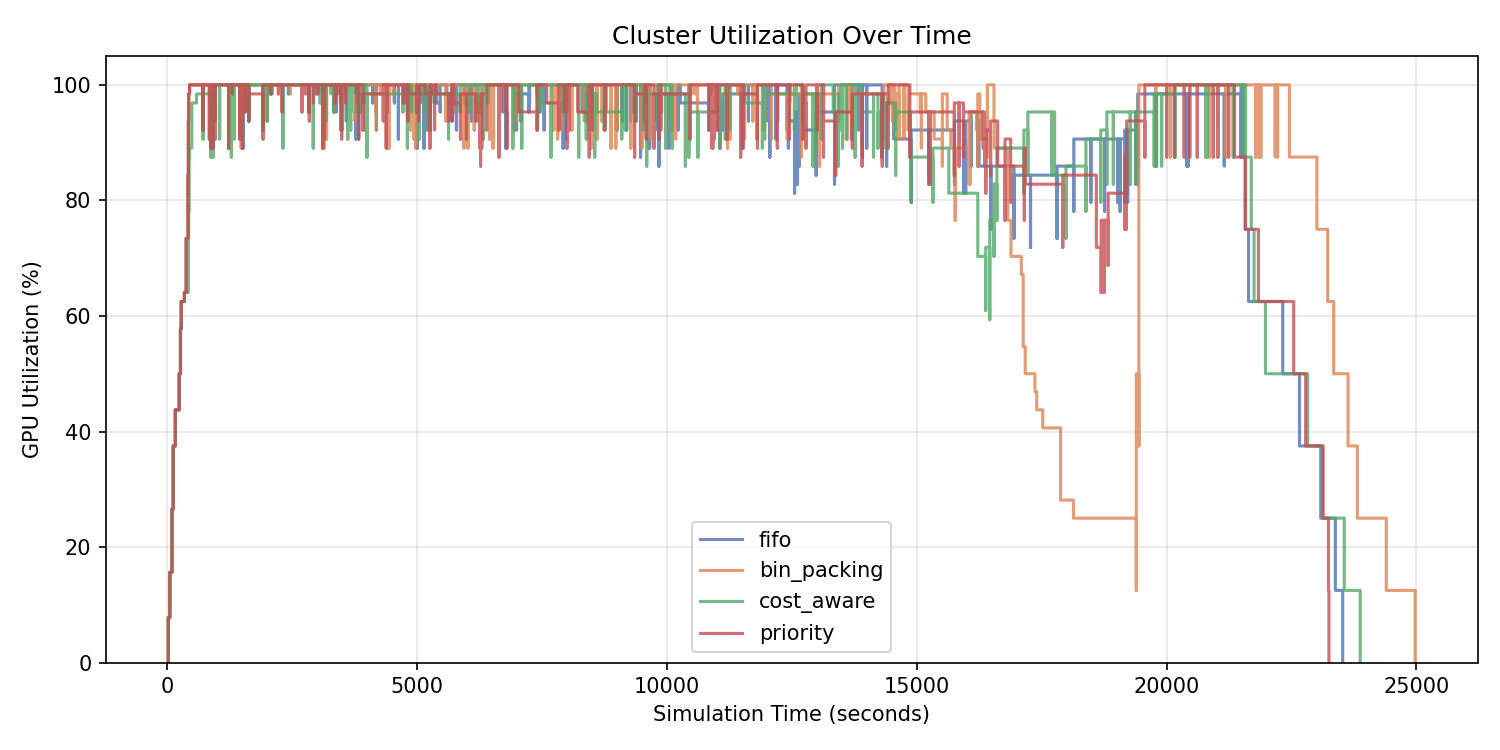

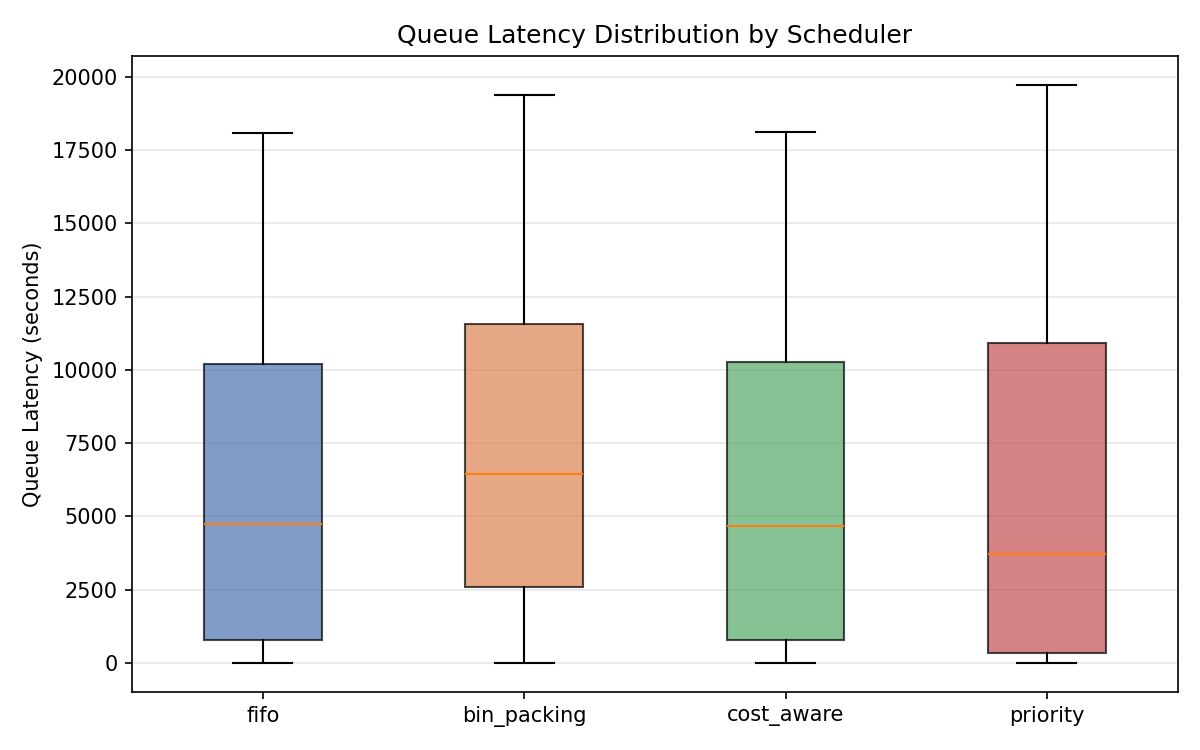

Showcase scenario: 64 GPUs (8 nodes, mixed A100 + H100), 150 jobs with Poisson arrivals, 50% same-node placement requirement.

| Metric | FIFO | Bin Packing | Cost-Aware | Priority |
|---|---|---|---|---|
| GPU Utilization | 91.7% | 86.3% | 90.3% | 92.7% |
| Total Cost | $1,991 | $2,003 | $1,994 | $1,983 |
| Mean Latency | 6,206s | 7,466s | 6,302s | 6,083s |
| p95 Latency | 17,391s | 18,822s | 17,504s | 18,159s |
| Fairness (Jain) | 0.537 | 0.622 | 0.543 | 0.491 |
| Makespan | 23,505s | 24,955s | 23,855s | 23,229s |

No scheduler dominated. Priority delivered the best throughput (shortest makespan, 23,229s) and the lowest mean latency (6,083s), but the worst fairness (0.491). Bin Packing achieved the best fairness (0.622) by preserving larger contiguous placements, but had the highest mean latency (7,466s). FIFO remained the simplest middle ground, while Cost-Aware behaved similarly to FIFO because placement constraints dominated cost differences in this scenario.

Key findings

Fragmentation dominated cost policy in this scenario.

Cost varied by only ~1% across schedulers ($1,983 to $2,003), while utilization moved by 6.4 percentage points and fairness varied materially (0.491 to 0.622). Under heavy same-node constraints, placement feasibility mattered more than cost optimization. Cost-aware scheduling becomes more meaningful once jobs are easily placeable and GPU price variance is higher.

High utilization does not imply efficient scheduling.

Three of the four schedulers reported >90% utilization while the cluster simultaneously exhibited stranded capacity. Most GPUs were busy, and the few that were idle were unusable for waiting jobs due to fragmentation. Utilization alone is a misleading health metric.

Scheduler policy determines how tradeoffs are expressed, not whether they exist.

All four schedulers completed the full workload, with cost, makespan, and utilization in a relatively narrow range despite meaningful differences in fairness and queue latency. Arrival rate and same-node constraints shaped outcomes more than policy choice here. Systematic parameter sweeps would be needed to generalize further.

Priority improves responsiveness at the cost of starvation.

Priority had the lowest mean latency (6,083s) but the worst Jain fairness index (0.491). Low-priority jobs accumulated severe queue times. This mirrors a common pattern in systems like Kubernetes and Slurm: priority improves responsiveness for urgent work but can starve lower-tier workloads under contention.
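For reference, Jain's fairness index over n values x_1..x_n is (sum of x_i)^2 / (n * sum of x_i^2), ranging from 1/n (one participant gets everything) to 1.0 (all equal). The sketch below computes it. Which per-job or per-tenant quantity GPUFleet feeds into the index is not stated here, so the inputs in the example are purely illustrative, and the function name `jain_index` is hypothetical.

```python
def jain_index(values):
    """Jain's fairness index: (sum x)^2 / (n * sum x^2).

    Returns 1.0 when every value is zero (all participants are treated equally).
    """
    values = list(values)
    if not values:
        raise ValueError("jain_index needs at least one value")
    total = sum(values)
    squares = sum(v * v for v in values)
    if squares == 0:
        return 1.0
    return total * total / (len(values) * squares)


print(jain_index([2, 2, 2, 2]))  # perfectly even allocation
print(jain_index([1, 0, 0, 0]))  # one participant gets everything: 1/n
```

An index of 0.491, as reported for Priority, therefore indicates that outcomes were concentrated on roughly half of the jobs' worth of "equal shares", whatever the underlying quantity is.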

Utilization over time

Priority and FIFO keep the cluster busier for longer, but the utilization chart alone hides fairness and fragmentation effects. Higher sustained utilization does not automatically imply better scheduling outcomes.

Latency distribution

Priority lowers median latency but creates the worst fairness score, showing how responsiveness for urgent jobs can come at the cost of starvation for lower-priority work.

Why FIFO stalls despite free GPUs

Every scheduling pass is recorded as a JSONL record. This makes it possible to answer questions like "why did FIFO fragment here?" with concrete evidence rather than intuition.
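As a rough sketch of how such a trace might be queried, the helper below scans JSONL lines for passes that left GPUs idle while placing nothing. It assumes each record carries the `cluster_state.idle_gpus`, `candidates`, and `decisions` fields seen in the FIFO record on this page; the function name `stalled_passes` is hypothetical and the two sample lines are abbreviated for illustration.

```python
import json


def stalled_passes(lines):
    """Yield (time, idle_gpus, reasons) for passes with idle GPUs and no placements.

    `lines` is any iterable of JSONL strings, for example an open trace file.
    """
    for line in lines:
        if not line.strip():
            continue  # tolerate blank lines
        rec = json.loads(line)
        idle = rec["cluster_state"]["idle_gpus"]
        if idle > 0 and rec["candidates"] and not rec["decisions"]:
            reasons = [c["reason"] for c in rec["candidates"] if not c["feasible"]]
            yield rec["time"], idle, reasons


# Abbreviated sample records (illustrative, not a full trace).
sample = [
    '{"time": 10.0, "cluster_state": {"idle_gpus": 0}, "candidates": [], "decisions": []}',
    '{"time": 12398.6, "cluster_state": {"idle_gpus": 5}, "candidates": '
    '[{"job_id": "job-15", "feasible": false, '
    '"reason": "requires 8 GPUs with >=40.0GB; only 5 available"}], "decisions": []}',
]
for time, idle, reasons in stalled_passes(sample):
    print(f"t={time}: {idle} idle GPUs, blocked: {reasons}")
```

Because an open file is an iterable of lines, the same function can be pointed at a trace file directly, for example `stalled_passes(open("decision_trace_fifo.jsonl"))`, assuming the FIFO trace follows the `decision_trace_{scheduler}.jsonl` naming pattern.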

FIFO at t=12,399s: 5 GPUs idle, 4 jobs waiting, 0 placed

```json
{
  "schema_version": "1.0",
  "time": 12398.6,
  "scheduler": "fifo",
  "cluster_state": {
    "total_gpus": 64,
    "idle_gpus": 5,
    "idle_gpus_by_node": { "node-5": 1, "node-6": 4 }
  },
  "candidates": [
    {
      "job_id": "job-15",
      "feasible": false,
      "reason": "requires 8 GPUs with >=40.0GB; only 5 available"
    },
    {
      "job_id": "job-25",
      "feasible": false,
      "reason": "requires 8 GPUs with >=40.0GB; only 5 available"
    }
  ],
  "decisions": []
}
```

5 GPUs scattered across 2 nodes. The waiting jobs shown here each need 8 with same-node placement. No feasible placement exists. In constrained GPU clusters, nominal free capacity and schedulable capacity are not the same thing.
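The gap between nominal and schedulable capacity can be computed directly from the `idle_gpus_by_node` map in the record above. The following is a minimal sketch with hypothetical helper names; it considers only GPU counts under same-node placement and ignores the GPU memory requirement that also appears in the trace's `reason` field.

```python
def nominal_idle(idle_by_node):
    """Total idle GPUs, regardless of where they sit."""
    return sum(idle_by_node.values())


def same_node_feasible(idle_by_node, gpus_needed):
    """Nodes with enough idle GPUs to host the whole job on one node."""
    return [node for node, idle in idle_by_node.items() if idle >= gpus_needed]


def schedulable_idle(idle_by_node, gpus_needed):
    """Idle GPUs that could actually be used by same-node jobs of this size."""
    return sum((idle // gpus_needed) * gpus_needed for idle in idle_by_node.values())


idle = {"node-5": 1, "node-6": 4}     # from the FIFO record at t=12398.6
print(nominal_idle(idle))             # 5
print(same_node_feasible(idle, 8))    # []
print(schedulable_idle(idle, 8))      # 0
print(schedulable_idle(idle, 4))      # 4
```

For 8-GPU jobs the 5 nominally idle GPUs provide zero schedulable capacity, while a 4-GPU job could still use node-6. Tracking a size-aware measure like this alongside utilization is one way to surface stranded capacity.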

Outputs

- `comparison.json`: stable structured metrics for all schedulers
- `decision_trace_{scheduler}.jsonl`: per-pass decision logs
- `report.md`: markdown comparison summary
- PNG charts: utilization, queue depth, latency, and cost

Stack

Python 3.10+ · heapq-based discrete-event simulation · matplotlib for charts · PyYAML for scenario config · stdlib-only simulation core for deterministic behavior · 124 tests, 98% coverage